Performance and Optimisation Strategies for Dynamics Synchronisations

How to make the most out of Data Sync to get the best performance when synchronising data to Dynamics 365 (CRM).

Data Sync is fantastic at getting data in and out of Dynamics, but it is limited by the Dynamics API. When uploading large volumes of data you need to optimise your settings so that you don't hit the Dynamics threshold limits and get throttled, which will slow your synchronisation to a snail's pace.

The following page covers some considerations and tips on how to make the most out of Data Sync and optimise your Dynamics 365 integrations.

API Limits

Dynamics / Power Platform implements request limits to maintain a reliable service for customers. This is a complex area: the limits depend on the license type and other factors, and they may change at any time as Microsoft updates the service. You can read more about the current limit allocations in the Microsoft documentation.

Typically you will see the following errors in the log if you're hitting the limits:

RateLimitExceeded

TimeLimitExceeded

ConcurrencyLimitExceeded

Each of these errors is temporary and occurs when the Dynamics API is throttling your requests. Data Sync has a built-in feature that automatically backs off and retries the request up to 5 times.

In general it's better to avoid hitting the API limits by using lower batch and thread settings. If you keep hitting the limits, the synchronisation will be slower overall because the requests continually have to back off.
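The back-off behaviour described above can be pictured with a minimal Python sketch. This is an illustration only, not Data Sync's actual implementation: `ThrottledError`, `send_with_backoff`, the doubling delay, and the optional `retry_after` hint are all hypothetical names and assumptions introduced here. Only the three error codes and the limit of 5 retries come from the text.

```python
import time

# Error codes named in the section above.
THROTTLE_ERRORS = {"RateLimitExceeded", "TimeLimitExceeded", "ConcurrencyLimitExceeded"}


class ThrottledError(Exception):
    """Hypothetical exception standing in for a throttled Dynamics response."""

    def __init__(self, code, retry_after=None):
        super().__init__(code)
        self.code = code
        self.retry_after = retry_after  # seconds suggested by the service, if any


def send_with_backoff(send, max_retries=5, base_delay=1.0, sleep=time.sleep):
    """Call send(); on a throttling error wait and retry, up to max_retries times."""
    for attempt in range(max_retries + 1):
        try:
            return send()
        except ThrottledError as err:
            # Give up on unknown errors or once the retries are used up.
            if err.code not in THROTTLE_ERRORS or attempt == max_retries:
                raise
            # Assumed policy: honour the service hint, else double the delay each time.
            if err.retry_after is not None:
                delay = err.retry_after
            else:
                delay = base_delay * (2 ** attempt)
            sleep(delay)
```

Every retry adds waiting time on top of the failed request, which is why a configuration that never triggers throttling usually finishes sooner than an aggressive one that does.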

Threads & Batch Size

Within the Dynamics connector you can define the number of threads and batch size to use when synchronising the changes.

If you increase the batch size to be greater than 1 then the requests are sent as ExecuteMultipleRequest messages. You can send a maximum of 1000 requests in a single message. We recommend a batch size of 10 or less if you're using a high thread count; otherwise 50 should work well. Using the maximum is generally very slow and will not help your synchronisation performance.

If you increase the thread count to be greater than 1 then requests will be sent in parallel. If the batch size is larger than 10 and the thread count is also increased, you may see ConcurrencyLimitExceeded errors. This is because by default you can only run two ExecuteMultipleRequest calls at a time, unless you contact Microsoft Support and get this limit increased.

A baseline configuration to start with is a thread count of 4 and a batch size of 10. If you're not seeing any errors after, say, 20K records then you can try increasing the thread count.
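To make the interaction between the two settings concrete, here is a rough Python sketch of how records could be split into batches and dispatched on parallel workers. It is not how Data Sync is implemented internally: `chunk`, `sync`, and `send_batch` are hypothetical names, and `send_batch` stands in for whatever sends one batch to Dynamics. The defaults mirror the baseline of 4 threads and a batch size of 10.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk(records, batch_size):
    """Split records into consecutive batches of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [records[i:i + batch_size] for i in range(0, len(records), batch_size)]


def sync(records, send_batch, thread_count=4, batch_size=10):
    """Send every batch via send_batch using thread_count parallel workers."""
    batches = chunk(records, batch_size)
    with ThreadPoolExecutor(max_workers=thread_count) as pool:
        # pool.map returns results in the same order as the batches.
        return list(pool.map(send_batch, batches))
```

With these baseline values, 20,000 records become 2,000 batches, with at most 4 batches in flight at any moment. Raising either setting increases the load placed on the API at once, so it is worth changing one value at a time and watching the log for throttling errors.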

Real-Time Data

True real-time integration is hard to achieve, but you can get very close by using Ouvvi in combination with Data Sync in incremental mode, with an appropriate filter and a trigger to detect the changes. Using this method will typically apply changes to the target within 1 minute.

Start by creating a project in Ouvvi to hold your Data Sync project(s), then add a Data Sync step and configure the project to do your synchronisation.

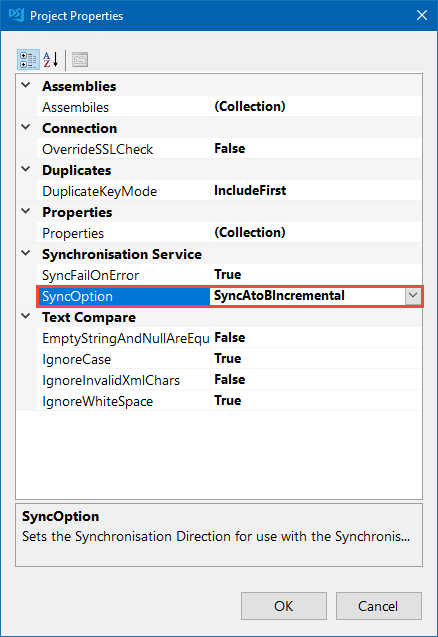

You can then set incremental mode by going to File > Properties > SyncOption and setting the value to SyncAtoBIncremental.

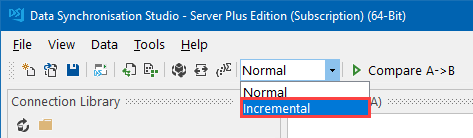

Alternatively you can set incremental mode from the main toolbar.

You would then configure your Data Sync project as normal, applying any filters to reduce the datasets being returned, and save your project back to Ouvvi. Reducing the dataset being returned speeds up the synchronisation, which gets you closer to real-time updates. For example, you could use the modifiedon column from Dynamics to filter for records that were modified today, or within the last hour.
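The effect of a modifiedon filter can be sketched in Python as follows. This only illustrates the idea of narrowing the dataset to a recent time window; it is not Data Sync's filter syntax. The function name `modified_within` and the dictionary-based record shape are assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone


def modified_within(records, window, now=None):
    """Keep only records whose modifiedon falls inside the trailing time window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - window
    return [r for r in records if r["modifiedon"] >= cutoff]


# Example: keep records modified in the last hour (sample data is made up).
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
records = [
    {"name": "A", "modifiedon": now - timedelta(minutes=5)},
    {"name": "B", "modifiedon": now - timedelta(hours=3)},
]
recent = modified_within(records, timedelta(hours=1), now=now)
```

The smaller the window, the fewer rows have to be compared on each run, as long as the project runs at least as often as the window is long so that no changes are missed.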

Once your project has been created and saved back to Ouvvi you can configure either a time trigger, to run your project at a regular interval, or an event trigger, to run your project when a change is detected, e.g. in a Dynamics entity. Ouvvi calls event triggers every 30 seconds to check whether there have been any changes, which is how you can get close to real-time synchronisation. Every time a change is detected in an entity (the modified timestamp changes) the project is run automatically.
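Conceptually, an event trigger behaves like the polling loop below. This is a simplified Python sketch, not Ouvvi's implementation: `poll_trigger`, `get_last_modified`, and `run_project` are hypothetical names, and the `max_polls` and `sleep` parameters exist only so the loop can be bounded and tested. The 30 second interval comes from the text.

```python
import time


def poll_trigger(get_last_modified, run_project, interval=30, max_polls=None, sleep=time.sleep):
    """Poll a modified timestamp and run the project whenever it changes.

    Returns the number of times the project was run.
    """
    last_seen = get_last_modified()  # baseline value, does not trigger a run
    polls = 0
    runs = 0
    while max_polls is None or polls < max_polls:
        sleep(interval)
        current = get_last_modified()
        polls += 1
        if current != last_seen:
            # The entity changed since the last check, so run the sync.
            run_project()
            last_seen = current
            runs += 1
    return runs
```

Because a change is noticed at the next poll rather than instantly, the worst-case delay is roughly one polling interval plus the time the incremental sync takes to run.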