SQL Server Insert Performance Boost

We've made some significant performance improvements to our SQL Server provider in Data Sync. (Release 3.0.986+)

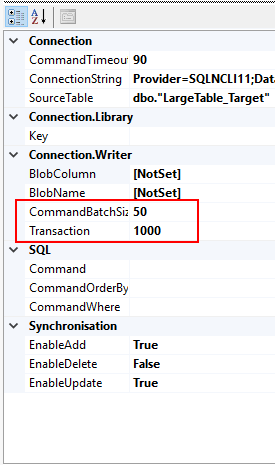

To support the test scenarios below we configured the CommmandBatchSize to 50 and the Transaction to 1000.

CommandBatchSize

Defines how many rows we send to SQL Server in a single command. There is a limit of 2000 parameters per request so ensure that the sum of your batch and columns is well below this limit.

Transaction

Defines how many rows make up a transaction. This is a new feature and previously we would get an auto-commit transaction on each row. To prevent Data Sync creating Transactions set this to Zero.

I have created 2 tests to try and get the fastest possible result one on the LAN and one Local. This is not a real-world example just a demonstration of the limits.

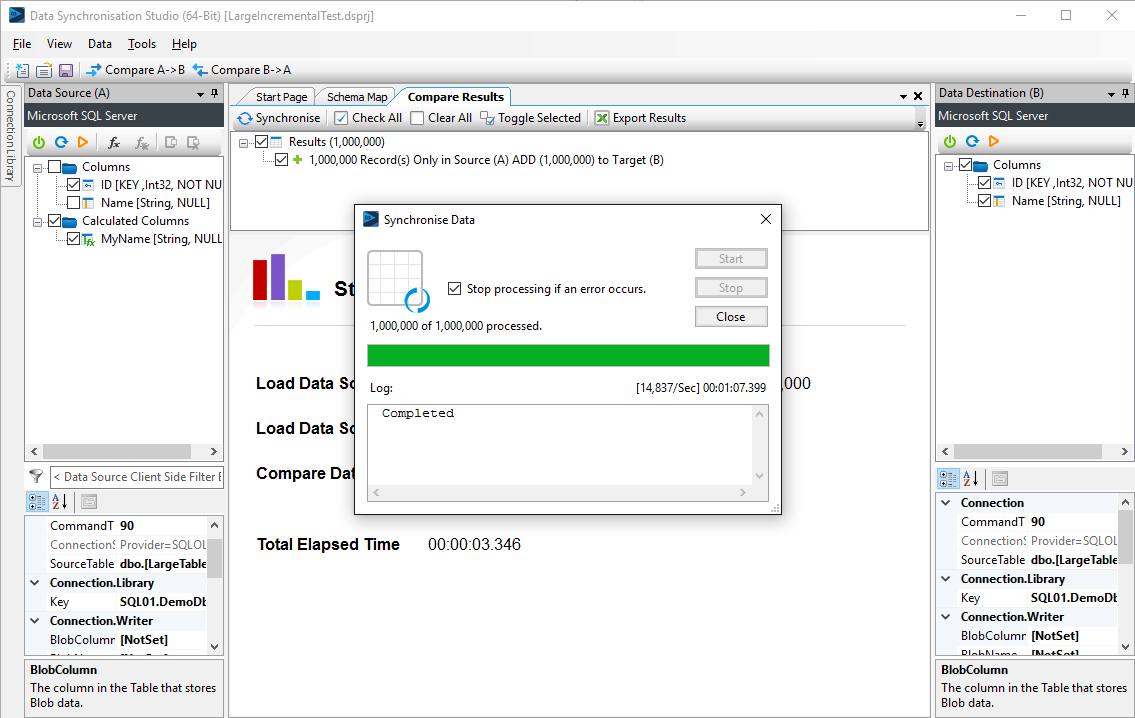

Test 1: 1,000,000 Rows to be INSERTED over the local LAN to SQL Server 2014 hosted in Hyper-V.

Results:

Process takes 1 minute 7 seconds to insert all 1M rows that is just over 14,000 rows per second.

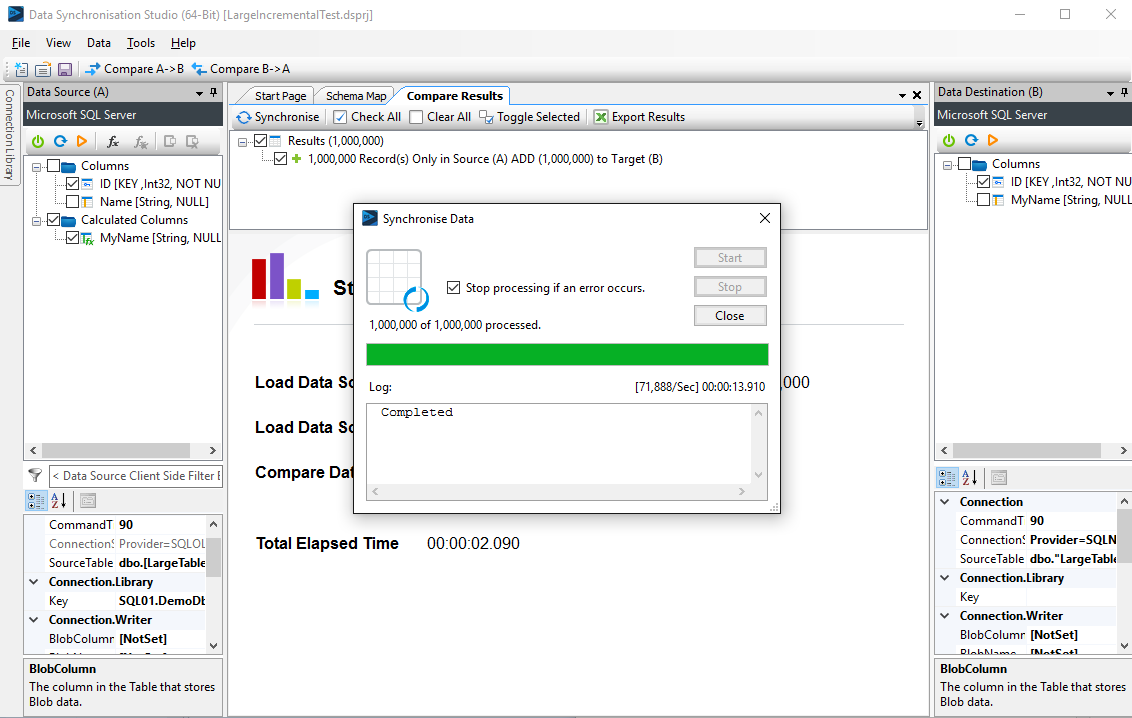

Now running the exact same test except to target a SQL Server Express Database on the same machine as where Data Sync is running.

Test 2: 1,000,000 Rows to be INSERTED to local SQL Server 2014 Express instance.

Results:

Process takes 14 seconds to insert all 1M rows that is just over 70,000 rows per second.

Considering the previous version of Data Sync would insert at around 500/Sec and the speed would slow down over time this is a major improvement when working with large data sets and SQL Server.

The changes we made to the SQL Provider also have a knock on effect to other providers for example in Dynamics CRM we are now seeing linear insert performance over time.

Incremental Load for SQL Server also now will load a much larger Data Set in incremental Mode, this 1M row Data Set can be loaded and compared in 8 seconds in Incremental Mode.